Are you struggling to gather all of a dormant website’s URLs for a redirect map or migration?

If you have worked in SEO for a while, you have probably been there before. Crawler restrictions, IT constraints or websites taken offline without due consideration are just a few of the scenarios you may have faced.

Although best practice for any migration is a full crawl of the domain with a reputable crawler, synced with server logs, Google Analytics and Google Search Console, there are some useful options available if this isn’t possible.

These will help you achieve an MVP (minimum viable product) migration at the very least, whilst you look to attack a best practice migration as quickly as possible via future phases and sprints.

Google Search Console

If you still have this verified, you can download all URLs that have been getting impressions for the last 16 months (depending on when the property was verified).

The easiest way to gather these, in my opinion, is to set up a Google Data Studio (now Looker Studio) table and export a CSV of all entries, as GSC itself can be a pain to export from, especially when there is a lot of data.

This should give you your most crucial URLs, but remember this is just Google data, and there could well be pages with authority that haven’t been getting search impressions for whatever reason (robots, noindex, canonicalisation etc.), so you shouldn’t stop there.

There are other tools available for warehousing your GSC data, but you would have needed to set these up in advance, as they won’t backfill historic data. More on that in a future article.

Wayback Machine

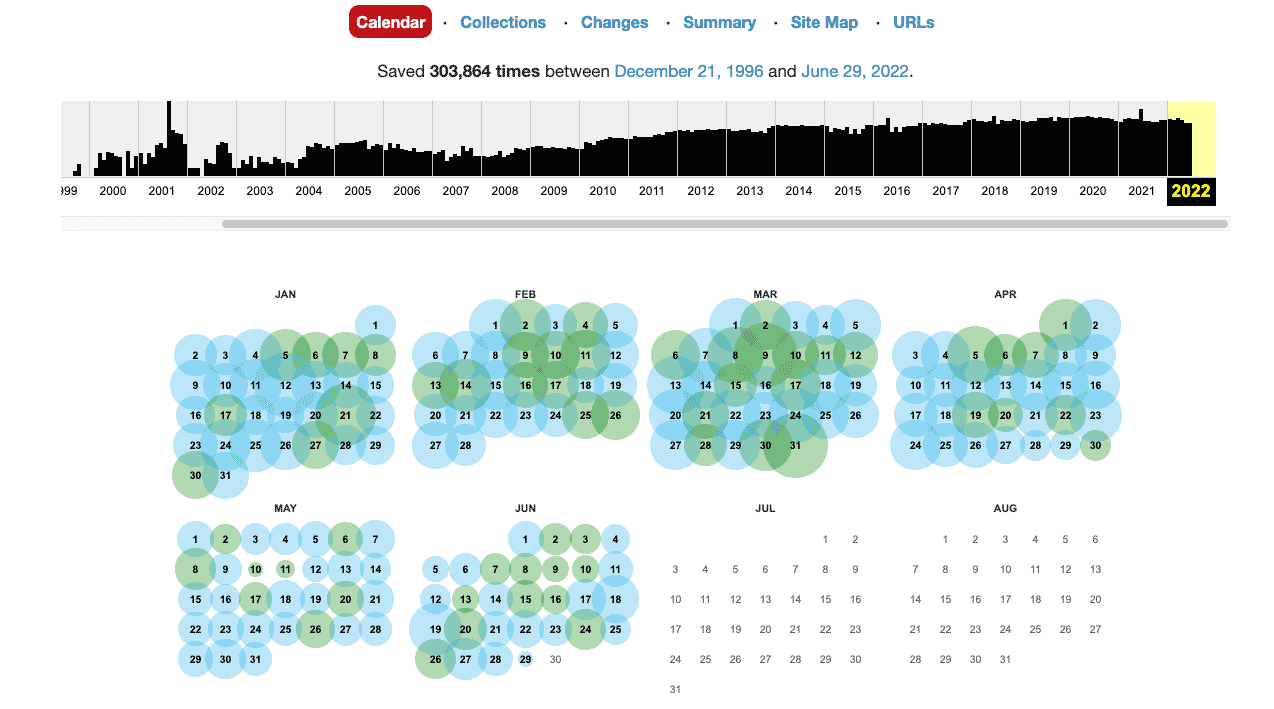

Powered by the Internet Archive at archive.org/web/, this is a huge database of most websites’ historic URLs. It also gives a good indication of how they have looked over the years, which can be useful.

There are some great hacks for pulling all of this data quickly (such as their own API), so you have historic URLs from previous site designs and structures. I’ve added my favourite from @dale here, but essentially this complements your GSC data nicely with data that, well, goes way back and could still have authority.
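As a rough illustration of the API route, the sketch below queries the Wayback Machine's CDX endpoint for every captured URL under a domain. It is a minimal example rather than a definitive method: the helper names (`build_cdx_url`, `parse_cdx_rows`, `fetch_wayback_urls`) are my own, and `example.com` stands in for your domain.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

CDX_ENDPOINT = "https://web.archive.org/cdx/search/cdx"


def build_cdx_url(domain: str) -> str:
    """Build a CDX query for every captured URL under a domain."""
    params = {
        "url": f"{domain}/*",   # wildcard: everything under the domain
        "output": "json",       # JSON array of rows, header row first
        "fl": "original",       # only return the original URL column
        "collapse": "urlkey",   # one row per unique URL
    }
    return f"{CDX_ENDPOINT}?{urlencode(params)}"


def parse_cdx_rows(payload: str) -> list[str]:
    """Turn the JSON response into a sorted, de-duplicated URL list."""
    rows = json.loads(payload) if payload.strip() else []
    # The first row is the header (["original"]); the rest are data rows.
    return sorted({row[0] for row in rows[1:]})


def fetch_wayback_urls(domain: str) -> list[str]:
    """Fetch archived URLs for a domain (makes a live network request)."""
    with urlopen(build_cdx_url(domain), timeout=60) as response:
        return parse_cdx_rows(response.read().decode("utf-8"))
```

Calling `fetch_wayback_urls("example.com")` should return a plain list you can paste into a spreadsheet. Large sites can return a very big response, so you may want to narrow the query to a subfolder first.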

Server Log Files

If you are struggling with IT for the aforementioned reasons, then the chances are that retrieving server logs for the last year or so will be tricky.

But this is a super reliable way of seeing exactly which URLs have received bot hits from Google and other search engines, and therefore which are likely to be the most crucial for migration.

If you have had a tool such as OnCrawl, DeepCrawl or Botify set up, then you should be golden. If not, you may need to speak to someone in the IT or Dev team very nicely and cross your fingers.

If you are able to get hold of these, the data will likely need a lot of cleansing, but this is the most comprehensive set of data available. You will need to use your intuition and experience to decide what makes it into your redirect map, starting with cross-referencing against your other data sources.
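To give a flavour of that cleansing, here is a minimal sketch that counts search engine bot hits per URL path. It assumes your logs are in the common Apache/Nginx "combined" format, which may not match your server, and the `BOT_TOKENS` list and `bot_hits` function are illustrative names of my own. It also trusts the user agent string, which can be spoofed, so treat the output as a starting point.

```python
import re
from collections import Counter

# Assumed Combined Log Format:
# ip - user [time] "METHOD path PROTOCOL" status size "referer" "user-agent"
LOG_LINE = re.compile(
    r'^\S+ \S+ \S+ \[[^\]]+\] "(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)

# Assumed list of user agent fragments; extend it for other search engines.
BOT_TOKENS = ("googlebot", "bingbot")


def bot_hits(lines):
    """Count hits per path for requests whose user agent names a search bot."""
    counts = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if not match:
            continue  # skip lines that are not in the assumed format
        agent = match.group("agent").lower()
        if any(token in agent for token in BOT_TOKENS):
            counts[match.group("path")] += 1
    return counts
```

Passing an open log file to `bot_hits` and calling `.most_common()` on the result gives you the paths ordered by how often the bots requested them, ready to cross-reference with your GSC and Wayback Machine lists.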

Ahrefs

This tool is hot property right now. With its backlink profiling, content explorer, keyword research and link intersect capabilities, and even plans for its own search engine, it’s not hard to see why.

But this tool can be priceless if you are going into a migration without the ability to crawl all environments. You can of course see all URLs that have received backlinks, which is key for any migration, but crucially you can also see their response codes.

These can be vital post-migration, as they can help to establish any URLs that may have slipped through the net. Just sort by 4xx or even 5xx if you are having issues with IT support and you will see what you need to add to your next phase of implementation.
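If you prefer to do that sort outside the tool, the sketch below filters an exported CSV down to URLs returning 4xx or 5xx codes. The column names `URL` and `HTTP code` are assumptions about the export, so check them against the headers in your own file, and `broken_urls` is just an illustrative helper name.

```python
import csv
import io


def broken_urls(csv_text, url_column="URL", status_column="HTTP code"):
    """Return (url, status) pairs for rows with a 4xx or 5xx response code."""
    reader = csv.DictReader(io.StringIO(csv_text))
    broken = []
    for row in reader:
        status = (row.get(status_column) or "").strip()
        # Keep only numeric codes that start with 4 or 5
        if status.isdigit() and status[:1] in ("4", "5"):
            broken.append((row[url_column], int(status)))
    # Sort by status code, then URL, so 4xx rows come before 5xx rows
    return sorted(broken, key=lambda item: (item[1], item[0]))
```

You would read the export with something like `open("export.csv", encoding="utf-8").read()` and pass the text in. The encoding of the export may differ, so adjust it if the headers come out garbled.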

Critical URLs

If you are unable to crawl the domains in question effectively, these steps should help you to gather all of your critical URLs for migration and mitigate any significant drop in SEO visibility.

You will, however, end up with a lot of URLs, and there will be some jiggery-pokery needed in Excel to remove duplicates and get rid of erroneous ones. Look out for those you wouldn’t redirect, such as parameter or filter pages, but this data should cover most of the heavy lifting. Then you just need to redirect each one to the most relevant URL on your new or destination domain.
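If Excel starts to creak under the volume, the same clean-up can be sketched in a few lines of Python. This is a minimal example under my own assumptions: it merges any number of URL lists, skips anything with a query string (a blunt stand-in for parameter and filter pages), ignores fragments and removes duplicates. The `clean_urls` name is hypothetical.

```python
from urllib.parse import urlsplit, urlunsplit


def clean_urls(*sources, drop_parameterised=True):
    """Merge URL lists, drop parameterised and malformed URLs, de-duplicate."""
    seen = set()
    cleaned = []
    for source in sources:
        for raw in source:
            url = raw.strip()
            if not url:
                continue  # skip blank rows
            parts = urlsplit(url)
            if not parts.scheme or not parts.netloc:
                continue  # skip relative or malformed entries
            if drop_parameterised and parts.query:
                continue  # skip parameter or filter pages
            # Lower-case scheme and host, drop the fragment, keep the path as-is
            normalised = urlunsplit(
                (parts.scheme.lower(), parts.netloc.lower(), parts.path or "/", "", "")
            )
            if normalised not in seen:
                seen.add(normalised)
                cleaned.append(normalised)
    return cleaned
```

Note that this treats `http://` and `https://` versions as different URLs and skips relative paths, so log file paths would need the domain adding first. Whether that is right for your redirect map is a judgement call.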

As with any SEO task, be sure to test and then test again and do plenty of checks post-launch.

The following steps should also help once you have your first phase completed:

Google Search Console (Again)

As you will notice, GSC is the holy grail for any migration and without it you will be struggling, not least because of the ability to submit new URLs, domain names and XML Sitemaps.

When it comes to your redirect mapping, check for spikes in certain response codes such as 404s, as these are probably URLs that haven’t been caught and may need redirecting or re-establishing on the website.

Rank Tracker

Rankings are, of course, the holy grail of any SEO migration or, indeed, campaign. There will most likely be some flux for a week or so, but the best way to check if your migration has gone to plan is to track your rankings for key terms.

If certain categories or products just aren’t coming back, then you may need to look into why and this could be due to factors aside from your redirects.

SEO Visibility

Visibility is not a perfect SEO metric for a number of reasons, whether you use SEMRush, Ahrefs, Sistrix or another visibility tool. But these tools are very useful after Google updates and migrations for checking daily, and at a glance, whether top-line organic visibility has been maintained.

You can then dig into the above tools to get a bit more forensic about what is driving your fluctuations.

There are of course a lot of other elements to consider for any migration and redirects are just one (albeit utterly crucial) of these. I’ll be sharing my SEO Migration Checklist soon, but hopefully this is helpful should you be in a tricky spot going into a migration.

If you enjoyed this article, why not follow us at Whitworth SEO?